17.12.2025

Explainable AI (XAI): A Practical Guide to Transparent, Trustworthy AI

Artificial intelligence is now everywhere: from healthcare diagnostics to fraud detection. But as AI systems grow more powerful, they also become harder to understand. Why does the model say yes to one customer and no to another? Why did an algorithm flag a transaction as fraud? And how can organisations prove their AI is fair, safe, and compliant?

That’s where Explainable AI (XAI) comes in. If you want to understand how AI makes decisions, how to build trust, and how to stay compliant with emerging regulations, keep reading. This guide breaks down XAI in a clear and actionable way.

What is explainable AI?

Explainable Artificial Intelligence (XAI) refers to the broad set of approaches and research efforts aimed at making the behaviour and decisions of AI systems understandable to humans. Rather than being a single method, XAI focuses on improving transparency by providing meaningful explanations of how and why AI models produce specific outcomes.

Because many AI systems rely on heuristic methods and highly complex models, full interpretability is often not achievable. XAI therefore typically delivers partial, context-dependent explanations that vary by model type, data, and use case. More advanced and accurate models, such as deep learning systems, are generally harder to interpret than simpler models like decision trees.

Today, XAI is one of the fastest-growing areas of AI research, driven by regulatory requirements, ethical considerations, and the need to build trust, accountability, and human oversight in AI-driven decision-making.

Methods of Explainable AI (XAI)

Explainable AI (XAI) methods are techniques designed to make the decisions and behaviour of AI models understandable to humans. Rather than offering a single, universal solution, XAI approaches address different dimensions of transparency depending on the model type, the decision context, and the intended audience. Broadly, XAI methods can be grouped into three categories: intrinsically interpretable models, post-hoc explanation techniques, and visualization-based approaches. Each category supports different goals, such as model debugging, regulatory compliance, or building user trust.

Intrinsically interpretable models

Intrinsically interpretable models are designed to be transparent by their very nature. Their internal logic is directly accessible and understandable without the need for additional explanation layers. Common examples include linear and logistic regression, decision trees, and rule-based systems.

In these models, the decision-making process is explicit. For instance, a decision tree represents logic through a sequence of conditional branches, clearly showing how specific input features lead to a final outcome. Similarly, regression models express the influence of each feature through coefficients, making it possible to see both the direction and magnitude of their impact on predictions.
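As a minimal sketch of how regression coefficients expose direction and magnitude, the snippet below uses a hypothetical logistic regression for loan approval. The feature names, coefficients, and applicant values are illustrative assumptions, not taken from any real model.

```python
import math

# Hypothetical, hand-picked coefficients for an illustrative loan model.
# In practice these would come from a fitted logistic regression.
INTERCEPT = -1.0
COEFFICIENTS = {
    "income_k": 0.04,        # positive: higher income raises approval odds
    "debt_ratio": -3.0,      # negative: more debt lowers approval odds
    "years_employed": 0.2,   # positive: longer employment raises approval odds
}

def explain(applicant):
    """Return the approval probability and each feature's log-odds contribution."""
    contributions = {
        name: COEFFICIENTS[name] * applicant[name] for name in COEFFICIENTS
    }
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions

applicant = {"income_k": 50, "debt_ratio": 0.5, "years_employed": 3}
probability, contributions = explain(applicant)

# List features from most to least influential (by absolute contribution).
for name, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>15}: {value:+.2f} log-odds")
print(f"approval probability: {probability:.3f}")
```

Because the model is additive in log-odds, the explanation is the model itself: no separate approximation step is needed.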

While this class of models offers a high level of explainability and is well suited to auditability and governance, it typically comes with a trade-off. To remain interpretable, these models limit complexity, which can reduce performance in tasks involving highly non-linear relationships or unstructured data such as images or free text.

Post-hoc explanation techniques

Post-hoc explanation techniques are applied after a model has been trained and are commonly used to explain complex, high-performing models that are otherwise opaque. Rather than revealing the model’s internal mechanics, these methods approximate its behaviour to produce human-readable explanations.

These techniques can operate at different levels:

- Local explanations, which clarify why a model produced a specific decision for an individual input.

- Global explanations, which aim to describe the model’s overall behaviour across many predictions.

A common strategy in post-hoc explainability is to approximate the complex model with a simpler, interpretable representation—either around a single prediction or across a broader input space. Other approaches distribute credit among input features to estimate how much each contributed to the final outcome. While highly flexible and model-agnostic, post-hoc explanations are inherently approximate and may not fully capture all interactions within the original model, particularly at a global level.
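The local-surrogate idea can be sketched roughly as follows, assuming a stand-in `black_box` function in place of a real model. The sampling scale, the proximity kernel, and all function names are assumptions made for illustration; this follows the general recipe of perturbing an input and fitting a weighted linear model, not any specific library's implementation.

```python
import math
import random

def black_box(x):
    """Stand-in for an opaque model: a non-linear score in [0, 1]."""
    return 1.0 / (1.0 + math.exp(-(x[0] ** 2 - 2.0 * x[1])))

def solve(a, b):
    """Solve the small linear system a @ w = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def local_surrogate(model, x, n_samples=500, scale=0.3, seed=0):
    """Fit a proximity-weighted linear model around x; returns [bias, w_0, w_1, ...]."""
    rng = random.Random(seed)
    d = len(x) + 1
    xtx = [[0.0] * d for _ in range(d)]
    xty = [0.0] * d
    for _ in range(n_samples):
        z = [xi + rng.gauss(0.0, scale) for xi in x]           # perturbed input
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, x))
        weight = math.exp(-dist2 / (2 * scale ** 2))           # closer samples count more
        row = [1.0] + [zi - xi for zi, xi in zip(z, x)]        # offsets centred on x
        y = model(z)
        for i in range(d):
            xty[i] += weight * row[i] * y
            for j in range(d):
                xtx[i][j] += weight * row[i] * row[j]
    return solve(xtx, xty)                                     # weighted least squares

point = [1.0, 0.5]
bias, w0, w1 = local_surrogate(black_box, point)
print(f"local slope for feature 0: {w0:+.3f}, feature 1: {w1:+.3f}")
```

The fitted slopes describe the model only near `point`. At a different input, the same features may receive very different weights, which is why such explanations are local and approximate.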

Visualization-based approaches

Visualization-based XAI methods rely on graphical representations to illustrate how models process and respond to input data. These approaches are especially prevalent in deep learning, where traditional forms of interpretability are limited.

In computer vision, visualization techniques can highlight regions of an image that most strongly influenced a prediction, helping observers understand what the model “attended to”. In natural language processing, attention-based visualizations indicate which words or phrases were most influential in generating a particular output. By translating abstract computations into visual cues, these methods make complex model behaviour more accessible to both technical and non-technical audiences.

However, visualization-based explanations require careful interpretation. Visual prominence does not always equate to causal importance, and without validation, such explanations can create a misleading sense of understanding. As a result, they are most effective when used alongside other explainability methods.
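One simple way to produce such a heatmap is occlusion: hide one region at a time and measure how much the model's score drops. The sketch below assumes a toy 4x4 "image" and a stand-in `model_score` function. Both are hypothetical and chosen so the result is easy to check by hand.

```python
def model_score(image):
    """Stand-in classifier: responds only to brightness in the top-left 2x2 patch."""
    return sum(image[r][c] for r in range(2) for c in range(2)) / 4.0

def occlusion_map(model, image, fill=0.0):
    """Score drop when each pixel is replaced by `fill` (larger = more influential)."""
    base = model(image)
    heatmap = []
    for r, row in enumerate(image):
        heat_row = []
        for c in range(len(row)):
            occluded = [list(line) for line in image]  # copy, then hide one pixel
            occluded[r][c] = fill
            heat_row.append(base - model(occluded))
        heatmap.append(heat_row)
    return heatmap

image = [
    [0.9, 0.8, 0.1, 0.0],
    [0.7, 0.6, 0.2, 0.1],
    [0.1, 0.0, 0.9, 0.9],
    [0.0, 0.1, 0.9, 1.0],
]
heatmap = occlusion_map(model_score, image)
for row in heatmap:
    print(" ".join(f"{v:.3f}" for v in row))
```

In this toy case the bright bottom-right corner of the image receives zero influence, because the stand-in model never looks at it. Visually prominent regions and influential regions need not coincide.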

Together, these three categories illustrate that explainability is not a single feature but a spectrum of techniques. In practice, organizations often combine multiple XAI methods to address different transparency needs: using interpretable models where possible, post-hoc explanations where necessary, and visualizations to support analysis and communication.

Why explainable AI matters

Explainable AI plays a central role in making automated decision-making transparent, accountable, and trustworthy. With XAI methods, a model can not only produce a decision but also indicate which parts of the input contributed to it, which makes its behaviour easier for humans to review and verify.

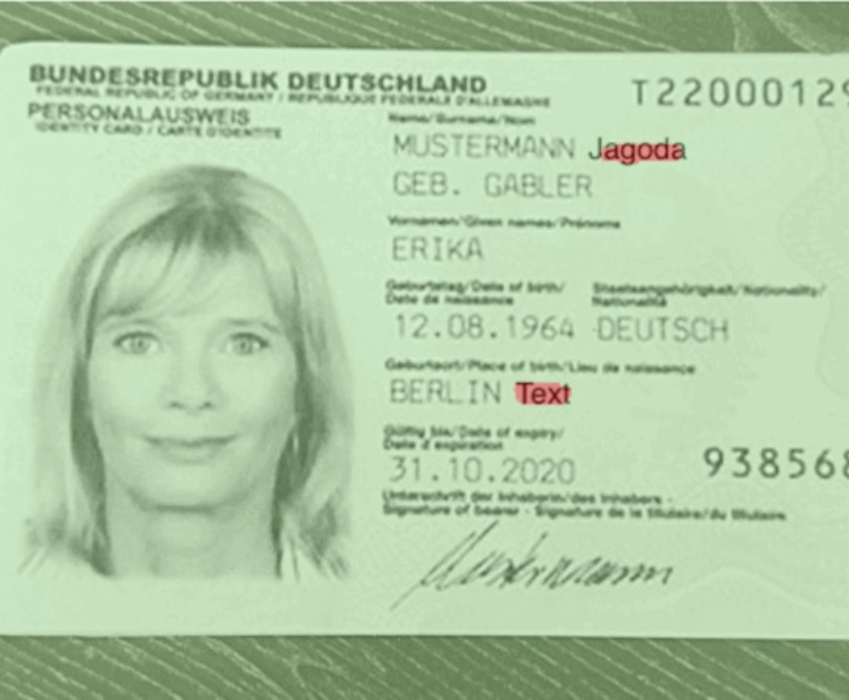

The model does not only indicate that the document has been manipulated; based on a detailed, layer-by-layer analysis, it is able to trace back its decision and explain why this conclusion was reached, highlighting the specific modification that was detected.

As organisations deploy AI in increasingly sensitive areas such as finance, healthcare, public services, cybersecurity, and criminal justice, regulators expect systems to justify their outputs in a way that people can understand. Explainability helps ensure AI decisions are not only accurate but also fair, compliant, and defensible. It provides the clarity needed for oversight, helps mitigate risk, and strengthens user trust.

Below are the key regulatory and operational reasons that make XAI essential.

Transparency and Accountability

Under the EU AI Act, deployers of high-risk AI systems are required to provide affected individuals with clear and meaningful explanations of decisions based on AI outputs that produce legal or similarly significant effects (as set out in Article 86). This complements other transparency and documentation obligations in the Act. In sectors such as banking, lending, or insurance, institutions must be able to explain decisions like loan approvals, fraud alerts, or credit scoring.

Explainable AI enables teams to trace why a decision was made, identify which factors influenced an outcome, and present this information in a human-understandable format. This transparency supports compliance and makes it easier for auditors, customers, and regulators to review and validate automated processes.

Bias Detection and Mitigation

Laws and ethical standards demand that AI systems treat individuals fairly and without discrimination. Yet models can unintentionally learn biases hidden in training data.

XAI makes it possible to uncover these biases by exposing how the model reasons and how different attributes influence predictions. With visibility into these patterns, organisations can detect unintended discrimination, correct the training data, retrain or adjust the model, and demonstrate compliance with anti-discrimination requirements.
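A first, coarse check for unintended discrimination is to compare outcome rates across groups before looking at feature-level explanations. The sketch below assumes a list of hypothetical `(group, approved)` decisions. The group labels and counts are invented for illustration, and the ratio shown is only one of several possible fairness measures.

```python
def approval_rates(records):
    """Approval rate per group, computed from (group, approved) pairs."""
    totals, approved = {}, {}
    for group, ok in records:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {g: approved[g] / totals[g] for g in totals}

def disparate_impact(rates):
    """Ratio of the lowest to the highest group approval rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so rates are equal
    return min(rates.values()) / highest

# Hypothetical decision log: group A approved 8 of 10, group B approved 5 of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
rates = approval_rates(decisions)
ratio = disparate_impact(rates)
print(rates, f"ratio={ratio:.3f}")
```

A low ratio does not prove discrimination on its own, but it signals where feature-level explanations should be examined more closely.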

Legal and Ethical Compliance

Regulations such as the General Data Protection Regulation (GDPR) are widely read as giving individuals a right to meaningful information about the logic behind decisions made solely by automated systems. This means organisations must be prepared to show why a decision was reached and ensure the explanation is accessible to non-experts.

Explainability makes compliance possible by producing understandable outputs that individuals can challenge, validate, or review. It strengthens due process and ensures automated decisions remain anchored in legal and ethical standards.

Trust and Adoption

AI systems are more likely to be trusted and adopted when their behaviour is understandable. Users, customers, and stakeholders want to know why a model behaves the way it does, especially when decisions affect access to healthcare, loans, education, or legal outcomes.

XAI strengthens credibility by making model operations clear, reducing uncertainty, and helping people feel confident that the system is behaving ethically and logically. Trust is a critical factor in regulatory approval and long-term adoption.

Auditing and Monitoring

Regulators require organisations to continuously monitor AI systems for fairness, stability, and compliance. Explainable AI supports this by generating traceable evidence of how decisions are made and by documenting model behaviour throughout its lifecycle.

XAI techniques create audit-ready insights, making it easier to inspect or investigate outcomes, identify anomalies, and demonstrate that the model operates within established boundaries.

Enhancing Model Governance

As organisations build comprehensive AI governance frameworks, explainability becomes a foundational principle. Strong governance requires clear standards for development, evaluation, deployment, and monitoring.

Explainable AI strengthens these frameworks by ensuring:

- Decisions can be traced and justified

- Risks are identified early

- Models align with ethical and regulatory expectations

- Teams have the tools to intervene when issues arise

Explainability enhances organisational control over AI systems, reduces operational risk, and ensures models remain aligned with legal, ethical, and business standards.

How explainable AI works

Explainable AI works through two main approaches: building inherently interpretable models or adding interpretability to complex models after they are built.

1. Self-interpretable models

A self-interpretable model is transparent by design. Techniques such as decision trees or linear regression make the decision process visible by exposing the exact rules, weights, or relationships used to generate predictions. Although these models may be less accurate than more complex architectures on some tasks, their interpretability is crucial for accountability, regulatory compliance, and situations where understanding “why” matters as much as the final result.
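To illustrate what exposing the exact rules can look like, here is a small sketch of a decision tree that returns the rule path alongside its prediction. The tree structure, feature names, and thresholds are hypothetical and written by hand rather than learned from data.

```python
# Hypothetical hand-written tree; features and thresholds are illustrative only.
TREE = {
    "feature": "debt_ratio", "threshold": 0.4,
    "left": {
        "feature": "income_k", "threshold": 30,
        "left": {"label": "reject"},
        "right": {"label": "approve"},
    },
    "right": {"label": "reject"},
}

def predict_with_path(node, sample):
    """Walk the tree and record every condition that was evaluated on the way."""
    path = []
    while "label" not in node:
        name, threshold = node["feature"], node["threshold"]
        if sample[name] <= threshold:
            path.append(f"{name} <= {threshold}")
            node = node["left"]
        else:
            path.append(f"{name} > {threshold}")
            node = node["right"]
    return node["label"], path

label, path = predict_with_path(TREE, {"debt_ratio": 0.3, "income_k": 45})
print(label, "because", " and ".join(path))
```

The recorded path is a complete account of the decision, not an approximation of it.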

2. Post-hoc explanations

Post-hoc explanations are applied to black-box models: AI systems whose internal logic is hidden or too complex to interpret directly. Models like deep neural networks can deliver high accuracy, but the path from input to output is not easily understood. This opacity makes it harder to evaluate fairness, identify errors, or build user trust, especially in high-stakes environments where decisions must be justified. Post-hoc techniques are therefore added after training to approximate the model’s behaviour and communicate it in human-readable form.

Comparing AI and XAI

Where conventional AI development focuses primarily on predictive performance, explainable AI adds a set of approaches that aim to support the following properties, depending on the model, context, and use case:

- Interpretability – enabling humans to better understand model outputs, including what a returned value represents and how it may change in response to different inputs.

- Transparency – improving the ability to trace decision-making processes and relate outcomes to specific input factors, helping identify which data characteristics influenced a result.

- Auditability – supporting the examination, review, and documentation of model behaviour over time, allowing internal teams, auditors, or regulators to assess how decisions were produced and whether the system operates as intended.

- Fairness – facilitating the analysis of model outcomes to detect and mitigate potential bias, helping reduce the risk of systematic disadvantage affecting individuals or groups.

- Human controllability – enabling meaningful human oversight by supporting the ability to question, intervene in, or override AI-driven decisions where appropriate.

Explainable AI techniques

XAI techniques are commonly divided into global and local methods, depending on whether they explain overall model behaviour or individual predictions.

Global explainability techniques

These methods explain the model as a whole, showing general rules, feature effects, or global behaviour.

- Permutation Importance – Measures how much the model’s performance degrades when the values of a single feature are randomly shuffled, indicating how strongly the model relies on that feature.

- Partial Dependence Plots (PDP) – Show how changes in one or two input features affect the model’s predictions on average, helping to understand whether relationships are linear, monotonic, or more complex.

- Morris Sensitivity Analysis – Evaluates the influence of input variables by changing one feature at a time, making it useful for identifying which inputs matter most in large or complex models.

- Accumulated Local Effects (ALE) – Explains how features influence predictions on average using local differences in predictions, which reduces the misleading effects that averaging methods such as PDP can show when features are correlated.

- Global Interpretation via Recursive Partitioning (GIRP) – Creates an interpretable decision structure that summarises the most important rules and patterns learned by a complex model at a global level.
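Two of the techniques listed above, permutation importance and partial dependence, can be sketched in a few lines. Permutation importance shuffles one feature and measures how much the error grows. Partial dependence forces one feature to a fixed value and averages the predictions. The stand-in `model`, the synthetic data, and the helper names are assumptions for illustration.

```python
import random

def model(row):
    """Stand-in black box: depends strongly on x0, weakly on x1, not at all on x2."""
    return 3.0 * row[0] + 0.5 * row[1] ** 2

def mse(model, X, y):
    """Mean squared error of the model on (X, y)."""
    return sum((model(r) - t) ** 2 for r, t in zip(X, y)) / len(X)

def permutation_importance(model, X, y, feature, seed=0):
    """Increase in error after shuffling one feature column."""
    rng = random.Random(seed)
    column = [r[feature] for r in X]
    rng.shuffle(column)
    X_perm = [r[:feature] + [v] + r[feature + 1:] for r, v in zip(X, column)]
    return mse(model, X_perm, y) - mse(model, X, y)

def partial_dependence(model, X, feature, grid):
    """Average prediction when `feature` is forced to each value in `grid`."""
    curve = []
    for value in grid:
        preds = [model(r[:feature] + [value] + r[feature + 1:]) for r in X]
        curve.append(sum(preds) / len(preds))
    return curve

rng = random.Random(42)
X = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(200)]
y = [model(r) for r in X]  # targets the model fits perfectly, to keep the sketch simple

importances = [permutation_importance(model, X, y, f) for f in range(3)]
pdp_x0 = partial_dependence(model, X, 0, [-1.0, 0.0, 1.0])
print("importances:", [round(v, 3) for v in importances])
print("partial dependence on x0:", [round(v, 3) for v in pdp_x0])
```

The unused feature receives an importance of zero, and the partial dependence curve for `x0` rises by a constant amount per unit, revealing the linear relationship.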

Local explainability techniques

These methods explain individual predictions, showing how a model reasoned for a specific data point.

- LIME (Local Interpretable Model-Agnostic Explanations) – Approximates a complex model locally by fitting a simple, interpretable model around a single prediction to highlight the most influential features.

- Anchors – Produces clear, rule-based explanations that identify the key conditions which, when met, reliably lead the model to a specific prediction.

- Contrastive Explanation Method (CEM) – Explains predictions by identifying what features must be present to support an outcome and what features must be absent to change it.

- Counterfactual Instances – Show how minimal changes to input features could lead to a different prediction, helping users understand what factors drive decision changes.

- Integrated Gradients – Assigns importance scores to input features by accumulating the gradients of the model’s output along a path from a baseline input to the actual input; commonly used with deep learning models.

- Protodash – Selects a small set of weighted, representative data points (prototypes), helping explain predictions through similar, influential examples.
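As one worked example from this list, the sketch below approximates Integrated Gradients for a tiny logistic model whose gradient can be written analytically. The weights, the input, the all-zero baseline, and the number of integration steps are assumptions for illustration. Real deep learning models would rely on automatic differentiation instead.

```python
import math

WEIGHTS = [2.0, -1.0, 0.5]  # hypothetical weights of a tiny logistic model

def f(x):
    """Model output: sigmoid of a weighted sum of the inputs."""
    return 1.0 / (1.0 + math.exp(-sum(w * xi for w, xi in zip(WEIGHTS, x))))

def grad(x):
    """Analytic gradient of f with respect to each input."""
    p = f(x)
    return [p * (1.0 - p) * w for w in WEIGHTS]

def integrated_gradients(x, baseline, steps=200):
    """Midpoint Riemann-sum approximation of the path integral of gradients."""
    totals = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        for i, g in enumerate(grad(point)):
            totals[i] += g
    # Scale the average gradient by how far each input moved from the baseline.
    return [(xi - b) * t / steps for xi, b, t in zip(x, baseline, totals)]

x = [1.0, 2.0, -1.0]
baseline = [0.0, 0.0, 0.0]
attributions = integrated_gradients(x, baseline)
print("attributions:", [round(a, 4) for a in attributions])
print("sum:", round(sum(attributions), 4), "f(x) - f(baseline):", round(f(x) - f(baseline), 4))
```

A useful sanity check is that the attributions add up (approximately) to the difference between the model's output at the input and at the baseline.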

Techniques that work globally and locally

These methods can explain entire models or single predictions, depending on how they’re applied.

- SHAP (SHapley Additive exPlanations) – Uses game-theoretic principles to assign each feature a contribution value, providing consistent explanations for both global trends and individual predictions.

- Scalable Bayesian Rule Lists – Learns human-readable IF–THEN rules from data, allowing explanations that are interpretable at both the model and prediction level.

- Tree Surrogates – Train an interpretable decision tree to approximate a complex model, making it possible to analyse both overall logic and specific decisions.

- Explainable Boosting Machine (EBM) – Combines high predictive accuracy with full interpretability by modelling each feature’s effect separately, while still capturing important interactions.

Each method has strengths and limitations, and post-hoc explanations must be implemented carefully to avoid misleading users or exposing vulnerabilities.
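The game-theoretic idea behind SHAP can be illustrated with exact Shapley values on a three-feature toy model. This sketch enumerates every feature ordering, which is only feasible for a handful of features. It is not the SHAP library, and the `model`, `instance`, and `baseline` values are hypothetical.

```python
from itertools import permutations

def model(features):
    """Stand-in scoring model with an interaction between income and tenure."""
    score = 10.0 * features["income"] - 6.0 * features["debt"]
    score += 4.0 * features["income"] * features["tenure"]
    return score

def shapley_values(model, instance, baseline):
    """Exact Shapley values: average marginal contribution over all orderings."""
    names = list(instance)
    totals = {n: 0.0 for n in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)        # start from the reference input
        previous = model(current)
        for name in order:
            current[name] = instance[name]  # switch one feature to its real value
            value = model(current)
            totals[name] += value - previous
            previous = value
    return {n: t / len(orderings) for n, t in totals.items()}

instance = {"income": 1.0, "debt": 0.5, "tenure": 1.0}
baseline = {"income": 0.0, "debt": 0.0, "tenure": 0.0}
phi = shapley_values(model, instance, baseline)
print(phi)
print("sum of contributions:", sum(phi.values()), "model output gap:", model(instance) - model(baseline))
```

The contributions sum to the gap between the prediction and the baseline prediction, and the interaction term is split evenly between the two features involved. Practical tools approximate this computation because the number of orderings grows factorially with the number of features.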

Benefits of explainable AI

These advantages arise specifically from explainability and cannot be achieved by black-box AI alone.

Decision contestability

Explainable AI enables individuals and organisations to challenge and review automated decisions. Without explanations, meaningful contestability is impossible.

Actionable feedback from AI systems

XAI can reveal not only what happened, but what would need to change to achieve a different outcome. This makes AI outputs more useful and actionable for users.
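A counterfactual explanation makes this concrete: it searches for the smallest change that would flip the decision. The sketch below assumes a stand-in `approve` rule and changes one feature at a time in fixed steps. The rule, thresholds, and step sizes are hypothetical, and real counterfactual methods typically search over several features at once.

```python
def approve(applicant):
    """Stand-in decision rule; coefficients and threshold are hypothetical."""
    score = 0.04 * applicant["income_k"] - 3.0 * applicant["debt_ratio"]
    return score >= 0.45

def counterfactual(applicant, feature, step, max_steps=100):
    """Smallest change to one feature (in `step` increments) that flips the decision."""
    original = approve(applicant)
    candidate = dict(applicant)
    for _ in range(max_steps):
        candidate[feature] = round(candidate[feature] + step, 6)
        if approve(candidate) != original:
            return candidate
    return None  # no flip found within the search budget

applicant = {"income_k": 40, "debt_ratio": 0.5}
print("approved:", approve(applicant))
print("raise income to:", counterfactual(applicant, "income_k", step=1))
print("or lower debt ratio to:", counterfactual(applicant, "debt_ratio", step=-0.05))
```

Each result can be phrased as feedback to the user, for example "the application would have been approved with a debt ratio of 0.35 instead of 0.5".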

Stronger AI governance and accountability

Explainability provides the foundation for governance frameworks by making responsibility traceable. It allows organisations to assign ownership, document decisions, and enforce ethical standards.

Safer deployment in high-risk environments

In regulated or safety-critical domains, explainability enables pre-deployment validation and ongoing monitoring, reducing the likelihood of harmful or non-compliant behaviour.

Long-term sustainability of AI systems

Models that can be understood and audited are easier to maintain, update, and adapt over time. XAI reduces technical debt by preventing systems from becoming opaque and unmanageable.

Use cases for explainable AI

Explainability is increasingly essential across industries, especially where AI decisions impact individuals or carry legal risk.

Healthcare

- Diagnostic support systems – Explainable AI helps clinicians understand which clinical indicators or patterns influenced a diagnostic suggestion, supporting safer and more informed medical decisions.

- Medical imaging (e.g., MRI, CT scan analysis) – XAI can highlight image regions that contributed to a diagnosis, allowing radiologists to validate AI findings and reduce the risk of false positives or missed conditions.

- Treatment recommendation models – XAI can show which patient characteristics and clinical factors drove a recommended treatment, allowing clinicians to check the recommendation against their own judgement before acting on it.

- Resource optimisation and triage – Transparent models help hospitals understand how AI prioritises patients or allocates resources, ensuring decisions are fair, clinically justified, and ethically sound.

Financial services

- Loan approvals and credit scoring – Explainable AI clarifies which financial factors influenced approval or rejection, supporting fairness, customer transparency, and compliance with financial regulations.

- Fraud detection and AML – XAI helps analysts understand why transactions are flagged as suspicious, improving investigation efficiency and reducing false positives.

- Portfolio management – Explainable models reveal how risk, market signals, or asset characteristics influence investment decisions, supporting informed portfolio adjustments.

- Insurance risk assessments – Transparency allows insurers to justify pricing and risk classifications, increasing trust and enabling clearer communication with customers and regulators.

Criminal justice

- Recidivism prediction tools – Explainable AI exposes which factors contribute to risk scores, helping authorities identify and mitigate bias in high-impact judicial decisions.

- DNA and forensic analysis – XAI supports transparency in automated pattern recognition, allowing experts to validate findings and maintain evidentiary integrity.

- Resource allocation and crime forecasting – Explainability makes it possible to assess whether predictions rely on legitimate signals rather than biased historical data.

Autonomous vehicles

- Explaining navigation decisions – Explainable AI helps engineers and regulators understand why a vehicle chose a specific path or action in complex environments.

- Diagnosing system failures – XAI supports root-cause analysis by revealing which inputs or conditions triggered unsafe or unexpected behaviour.

- Increasing safety and user trust – Transparent decision-making improves public confidence and supports certification and regulatory approval processes.

Cybersecurity

- Alert rationale in intrusion detection – Explainable AI shows why an alert was triggered, helping analysts distinguish real threats from benign anomalies.

- Threat scoring – XAI clarifies how risk levels are calculated, enabling better prioritisation and response planning.

- Behavioural analytics – Explainability helps teams understand abnormal behaviour patterns and reduce false alarms.

Marketing and Sales

- Customer segmentation strategies – XAI reveals which attributes define segments, enabling more accurate and ethical targeting.

- Recommendation engines – Explainable recommendations help businesses understand why products or content are suggested, improving relevance and transparency.

- Predictive scoring for campaigns – XAI clarifies which factors drive engagement predictions, supporting fair and data-driven marketing strategies.

Education

- Personalised learning systems – Explainable AI helps educators understand how learning paths are adapted to individual students.

- Student performance prediction – Transparency allows teachers to assess whether predictions are based on meaningful academic indicators rather than biased proxies.

- Dynamic curriculum planning – XAI supports informed curriculum adjustments by revealing which learning patterns influence outcomes.

Real Estate

- Property valuation models – Explainable AI shows how factors like location, size, and market trends influence price estimates.

- Investment risk scoring – Transparency helps investors understand the drivers behind risk assessments and make better-informed decisions.

Which companies use explainable AI?

Leading technology companies treat XAI as a core part of their AI strategy.

- Google uses XAI in medical imaging, vision models, and text-to-image systems to provide insights into how predictions are formed.

- Apple includes explainability tools within Core ML to help developers detect bias and analyse mobile-deployed models.

- Microsoft Azure Machine Learning includes interpretable models like Explainable Boosting Machines and tools to generate SHAP-based insights.

- Anthropic treats explainability as crucial for building safe, reliable, and transparent AI systems and invests heavily in a field called mechanistic interpretability to understand how its models make decisions.

These companies are shaping industry standards for transparent and trustworthy automation.

Conclusions

Explainable AI is essential for building systems people can trust. It increases transparency, improves fairness, supports compliance, reduces risk, and strengthens the human-AI relationship. As adoption continues to grow, organisations that prioritise explainability today will lead the future of responsible and effective AI.
